A 10,000-word deep dive into the Polkadot architecture

Mr. Liu Yi discusses nine topics in depth: the current state of DApp development, three approaches to scaling public chains, Ethereum's path to Serenity, Gavin Wood's new journey, Polkadot, the comparison of Cosmos and DApp, a comparison of network topologies, an analysis of "cross-chain", and technology selection for next-generation DApp development.

The main title of this talk is an analysis of the Polkadot architecture, and the subtitle is a review of next-generation DApp development technology. The subtitle actually summarizes the talk better, because we discuss not only Polkadot but give a fairly comprehensive review of platform public chains, including Ethereum 2.0 and Cosmos. Polkadot is, of course, the focus.

I want to clarify the direction of DApp development technology. This is one of the core questions for the blockchain industry: it matters not only to developers but to other industry participants as well. I will therefore be as plain as possible, so that listeners without a technical background can follow.


1. Why is DApp important?

I start with DApps themselves. The full background is long, so I will cover it only briefly; skipping it would leave the argument logically incomplete.

DApp stands for Decentralized Application, a decentralized Internet application. Bitcoin, for example, is a DApp: a decentralized store-of-value cryptocurrency. The concept of decentralization is fairly complicated. Vitalik Buterin has an article arguing that decentralization has three dimensions: architecture, governance, and logic. You can look it up.

From the user's point of view, decentralization can be understood simply as not being controlled by an individual or a small number of participants, so it is a property that makes an application trustworthy. Blockchain is the mainstream technology for implementing DApps; in other words, blockchain is the infrastructure of DApps.

Unless otherwise specified, "blockchain" in this talk refers to public chains. The difference between a DApp and an ordinary Internet application lies in the D, decentralization. So why is decentralization important? Why is it worth the attention of so many IT and Internet practitioners? Is it a pseudo-concept?

The clearest answer to this question comes from Chris Dixon, a partner at a16z, who published an article titled "Why Decentralization Matters" in February 2018.

To understand his point of view, you first need to understand network effects. A network effect is the mechanism by which the utility of a product or service increases as its user base grows.

Take WeChat: the more people use it, the more powerful and indispensable it becomes. The core of Internet applications is establishing and maintaining network effects. Giants such as Google, Amazon, and BAT have all built strong network effects, making it difficult for latecomers to overtake them.

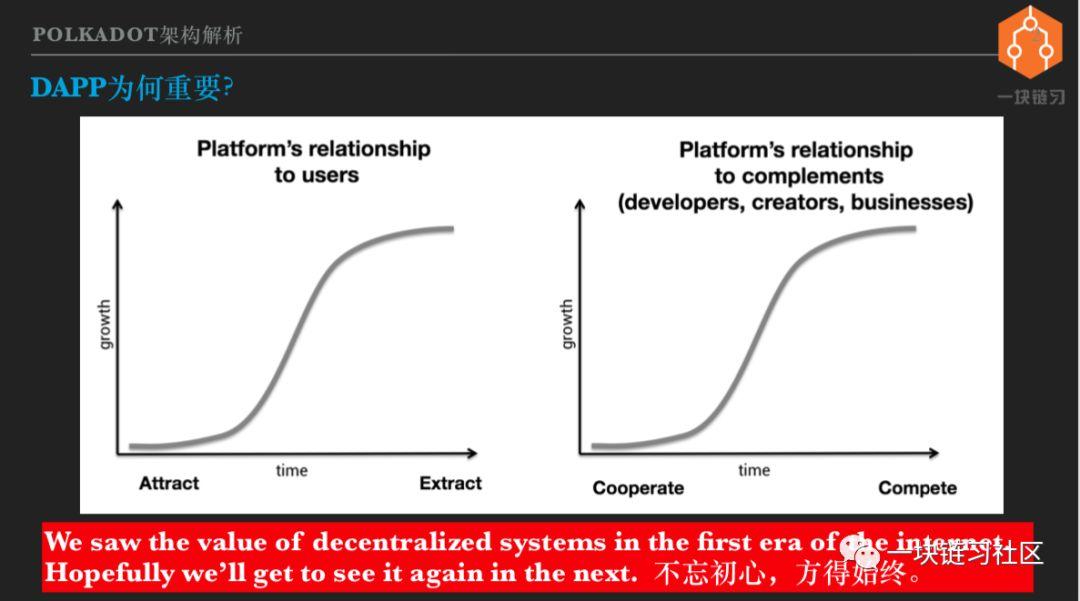

Chris argues that in its early stage an Internet platform does everything possible to attract users, developers, businesses, and so on. But once it passes critical scale, the platform becomes ever more attractive and its control grows ever stronger.

For example, if you run an e-commerce business today, it is almost impossible to succeed without relying on Tmall, JD.com (Jingdong), or WeChat. Because they have built huge network effects, both users and merchants are locked in. The operators of Internet platforms are all companies, and the mission of a company is to maximize profit.

Once users and merchants cannot leave the platform, the relationship between the platform and its users and merchants changes. Look at the picture above. At first the platform courts users; after a network effect forms, it starts extracting money from them.

The platform's relationship with developers, content creators, and merchants also gradually moves from cooperation to competition. For example, everyone knows that Baidu search results are not ranked by the authenticity and importance of the information; whoever pays more ranks higher.

In its early days, Baidu reached out widely to companies, asking them to submit their information for the convenience of users. Now, if you don't pay, your company's official website can't be found on Baidu. To make money, Baidu steered patients to Putian-affiliated hospitals. Domestic users know all this and still cannot do without Baidu, because Baidu has the most data and is the search engine users know best. It is frightening when you think about it.

DApps can change the monopoly of Internet platforms, because a DApp is a decentralized economy maintained by open and transparent consensus. The more a participant contributes to the network, the greater the corresponding rights, but no individual can control the whole.

Any party that tries to harm the interests of others will either fail or cause a fork. A DApp can remain open and fair over the long term, so you don't have to worry about the platform burning the bridge after crossing the river. Everyone will do their best to help build it and earn a return. It is a bit like the social ideal of "from each according to ability, to each according to work."

This is the truly open network, the original aspiration the Internet should not forget. That is why many Internet heavyweights have a special fondness for DApps and the blockchain technology that enables them, and place high hopes on both.

2. The DApp development dilemma

Decentralized applications carry the great ideal of reshaping the Internet, but their current state of development is awkward. Everyone knows this, so I will mention it only briefly.

First, there are very few users. Take the prediction market Augur, a star project in the DApp field: it raised tens of millions of dollars and spent more than three years in development, yet had only dozens of active users after launch. And Augur is not an isolated case.

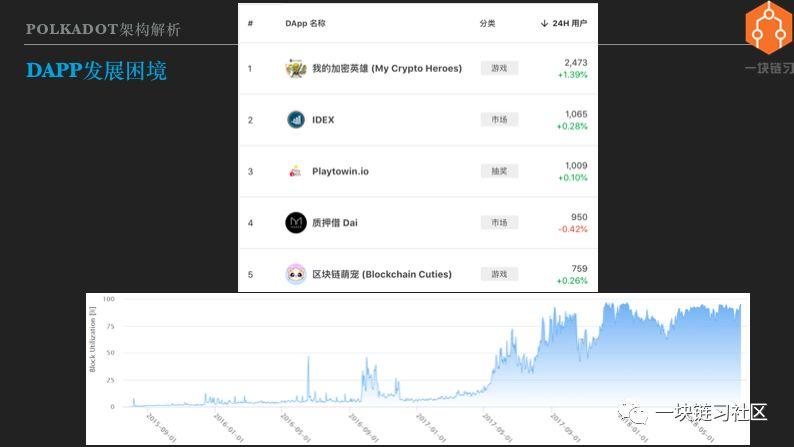

Look at the picture above, from DAppReview, showing the top five Ethereum DApps by daily users. The highest is only at the level of a thousand users, while top Internet applications reach hundreds of millions of daily users: a gap of five orders of magnitude.

Why are DApps doing so poorly? Mainly because the blockchain infrastructure is weak, so DApps have a high barrier to entry and a poor user experience. Ethereum is like a village road with high tolls and constant congestion; of course nobody wants to take it.

The chart below shows Ethereum's utilization. From the end of 2017 to the present, Ethereum has been running at full capacity. In other words, DApps are slow and expensive, yet the infrastructure is already going all out and has no room left for improvement.

In this situation, DApps cannot pass critical scale, create network effects, and compete with centralized Internet applications. The blockchain infrastructure therefore has to be upgraded.

3. Why DApps are slow and expensive – the blockchain's extremely redundant architecture

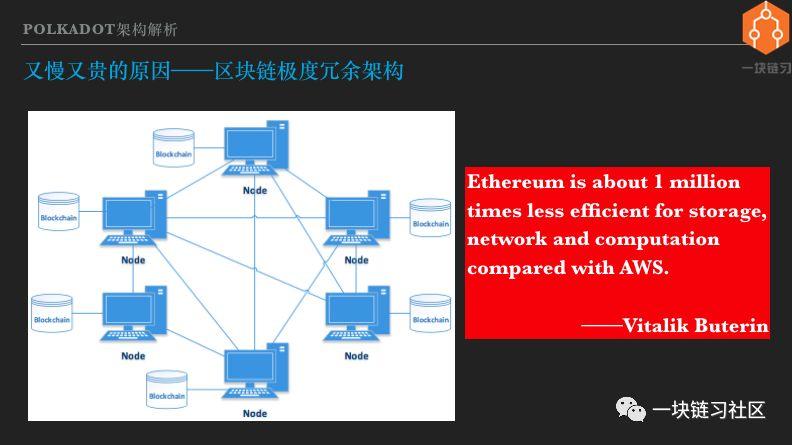

The root cause of DApps being slow and expensive is the architectural limitation of blockchain platforms, which can be summarized as follows: a blockchain is an extremely redundant computing architecture.

Redundancy is repetition: multiple computers repeatedly perform the same calculations and store the same data. Redundancy is intentional, not waste. Moderate redundancy is common in both enterprise computing and the Internet.

The most typical form is the primary/standby structure: two identical computers, one primary and one standby, perform the same calculations and store the same data. If the primary fails, the standby takes over quickly. Although the two machines do the work of one, they increase the availability of the system.

So why is the blockchain extremely redundant? Because it pushes redundancy to the limit: all computers in the network, whether hundreds or tens of thousands, perform the same calculations and store the same data. Redundancy cannot go any higher. Extreme redundancy means extremely high cost. How high?

Vitalik Buterin gave an estimate: performing calculations or storing data on Ethereum is 1 million times more expensive than doing the same on a commercial cloud platform. That is, a computation that costs 100 yuan on an ordinary cloud service costs 100 million yuan on Ethereum. So when considering which businesses can be built as DApps, you must consider cost.

Don't put any random thing on the blockchain just to have a story to tell; it is a huge waste of resources. So what do we get for paying 1 million times the cost? High availability is certainly one benefit: on the Bitcoin or Ethereum network, computers join or leave at any time with no impact on the service.

But high availability is obviously not reason enough, because it needs only moderate redundancy, not extreme redundancy. The new property that extreme redundancy gives us is decentralization. For users, decentralization means that the system is trustless, permissionless, and censorship-resistant.

Permissionless is easy to understand: anyone who wants to use Bitcoin or Ethereum does not need to apply to anyone. Censorship resistance is also clear: no one can stop you from using the blockchain. WikiLeaks is an example: the world's most powerful country hates it and wants to shut it down, yet WikiLeaks can still receive Bitcoin donations.

The meaning of trustless is less clear. In English it appears as trustless, trust-free, or trust-minimal. I think the most accurate term is trust-minimal, that is, trust is minimized. Using a decentralized application implicitly means trusting the blockchain network as a whole.

For example, to use Bitcoin or Ethereum, you must trust that they will not suffer a 51% attack. To use Cosmos or Polkadot, you must trust that malicious validators make up less than 1/3. So the exact meaning of trustless is that, provided you trust the blockchain network as a whole, you don't have to trust individual miners or validators, and you don't have to trust your counterparties.

If the benefits users get from trustlessness, permissionlessness, and censorship resistance are worth 1 million times the cost, then the application makes sense on a blockchain. Is there such an application? As far as I can see, the only need currently worth this cost is store of value.

The well-known Bitcoin maximalist Jimmy Song says that Bitcoin will succeed and all altcoins will fail. His reasoning is that a centralized currency can never beat a decentralized currency, and a decentralized product can never beat a centralized product.

The underlying logic is that for the same Internet service, the centralized product costs a million times less, so of course the decentralized one cannot compete. His argument has merit, but it is too rigid: the 1-million-fold cost difference is not inevitable. It can be changed, and it can be narrowed.

Can we reduce the cost gap between DApps and centralized Internet applications from 1 million times to 100,000 times, 10,000 times, or even 1,000 times, while still keeping the three benefits of trustlessness, permissionlessness, and censorship resistance? The answer is that it is entirely possible: reduce the degree of redundancy and the cost falls. There are three classes of methods, which are the three approaches to blockchain scaling: representation, layering, and sharding.

4. The first scaling approach – representation

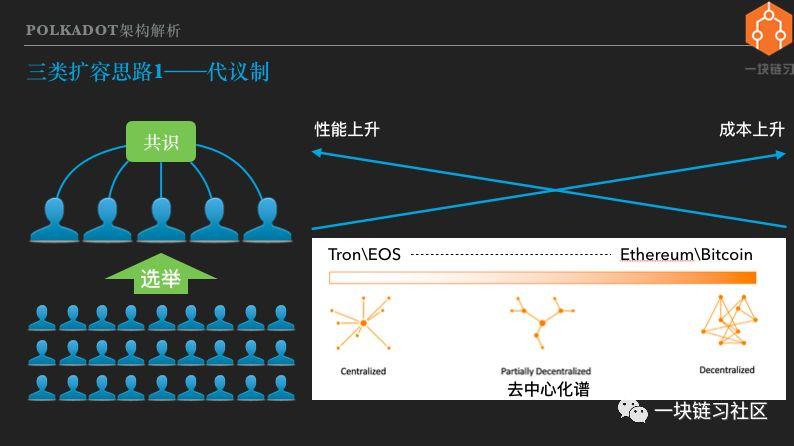

The first scaling approach, representation, stems from ancient human political wisdom: democracy is good, but direct democracy by the entire population is too inefficient. Brexit was decided by referendum, but obviously not every issue can be put to a referendum.

In a representative system, the people elect representatives, and the representatives reach consensus on laws and major resolutions. Representation improves decision-making efficiency for two reasons. First, the number of people participating in consensus is greatly reduced. Second, representatives are usually full-time politicians with more resources and knowledge for deliberating on national affairs.

Some blockchains scale in a representative manner; the most typical is EOS with its DPoS consensus. EOS token holders elect super nodes, and 21 super nodes produce the blocks. Compared with Ethereum, the number of computers participating in consensus drops by three orders of magnitude.

Moreover, the computing power of Ethereum nodes is uneven, and protocol parameters must be set with low-end computers in mind. EOS super nodes, by contrast, all face high requirements for host hardware and network bandwidth. So it is no surprise that EOS can reach thousands of TPS, far higher than Ethereum.
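To make the representative idea concrete, here is a minimal Python sketch of a stake-weighted election followed by round-robin block production. It is an illustration under simplifying assumptions, not EOS's actual voting rules: the function names, the tie-break by name, and the simple `slot % n` schedule are all hypothetical.

```python
from collections import Counter

def elect_block_producers(votes, stakes, seats=21):
    """Tally stake-weighted votes and return the top `seats` candidates.

    votes:  voter -> list of candidates the voter supports (hypothetical format)
    stakes: voter -> number of tokens held
    """
    tally = Counter()
    for voter, candidates in votes.items():
        for candidate in candidates:
            tally[candidate] += stakes.get(voter, 0)
    # Highest stake first; ties broken by name so the result is deterministic.
    ranked = sorted(tally.items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:seats]]

def producer_for_slot(producers, slot):
    """Round-robin schedule: elected producers take turns by slot number."""
    return producers[slot % len(producers)]

# Usage: three token holders vote, two seats are available.
votes = {"alice": ["bp1", "bp2"], "bob": ["bp2"], "carol": ["bp3"]}
stakes = {"alice": 10, "bob": 5, "carol": 12}
producers = elect_block_producers(votes, stakes, seats=2)
```

Only the elected producers take part in block production, which is where the reduction in consensus participants comes from.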

EOS has been controversial since its birth. Some in the crypto community criticize it severely, saying it is centralized, and some even argue it is not a blockchain at all. Proponents believe that EOS is decentralized enough, and that users still enjoy trustlessness, permissionlessness, and censorship resistance.

So is EOS decentralized enough? My view is that in some situations it is and in others it is not. It depends on what the application is and who is using it.

Users differ greatly from one another. By nationality there are Americans, Chinese, Iranians, Koreans, and so on. There are also differences in gender, age, race, region, occupation, religion, and more.

Moreover, a single user's needs are also diverse: social, entertainment, finance, collaboration, and so on. Each broad category divides into many subcategories. Within finance, money alone involves store-of-value needs, large-transfer needs, and small-payment needs.

If you hold most of your cryptocurrency as a long-term store of value, I would prefer Bitcoin. If it is a small payment, or playing mahjong or rolling dice, EOS is of course no problem. The blockchain world, from the most decentralized Bitcoin and Ethereum to the least decentralized EOS and TRON, can be seen as a decentralization spectrum.

Each public chain, including Polkadot and Cosmos, which are covered later, has a specific position on the spectrum and has the opportunity to fit specific needs. There is no one-chain-fits-all; no single chain will conquer the whole world.

Architecture design is about trade-offs: to gain something, you must give something up. The core idea of this talk is that the future blockchain world will be heterogeneous, with multiple chains coexisting. Of course, I don't think we need hundreds or thousands of public chains, because there are not that many reasonable positions available. Where positioning is similar, network effects will eliminate the weaker chains.

5. The second scaling approach – layering

Layering, also known as Layer 2 scaling or off-chain scaling, moves a portion of transactions off the blockchain while still guaranteeing their security. Layering includes state channels and sidechains. There is also a class of Layer 2 technology that moves computation-intensive tasks off-chain for execution; it is unrelated to the topic of this talk and is not covered.

State channels and sidechains are different technical metaphors, but at the implementation level they are very similar. Because Cosmos is closely related to sidechains, I will spend some time here on how sidechains work.

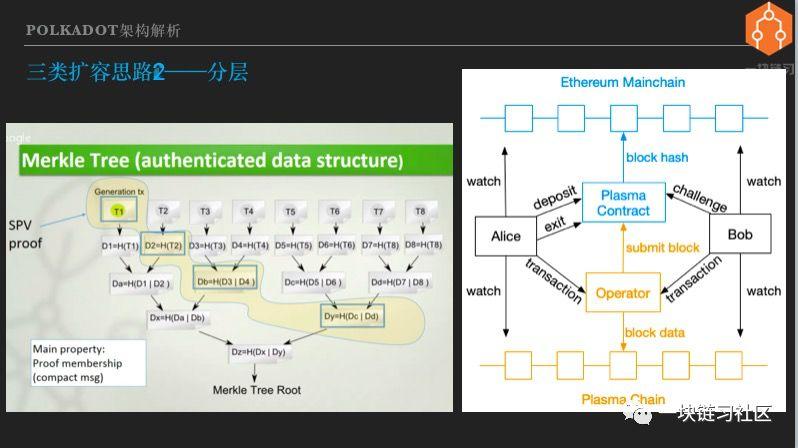

To understand sidechains, first understand the SPV proof. SPV stands for Simplified Payment Verification. SPV clients, also called light clients or light nodes, exist so that Bitcoin can be used on devices with limited computing and storage capacity.

A mobile wallet is a light client. It does not synchronize full blocks, only block headers, which reduces the data transferred and stored by a factor of 1,000. The diagram on the left shows the principle of the SPV proof, which uses a Merkle tree. It doesn't matter if you don't follow the details; just remember that the Merkle tree is the most important data structure in blockchains.

It lets you store a small amount of data and still prove that any one of a large number of facts occurred and belongs to a specific set. For a blockchain, a node stores only the block headers and can later verify whether a given transaction exists in a certain block.
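For readers who want to see the mechanism, the following is a minimal Python sketch of a Merkle inclusion proof. It is a simplification for illustration: it uses a single SHA-256 per node (Bitcoin uses double SHA-256), and the function names and proof format are assumptions of this sketch rather than any client's real API.

```python
import hashlib

def h(data: bytes) -> bytes:
    """Hash helper: one round of SHA-256 (a simplification)."""
    return hashlib.sha256(data).digest()

def merkle_root_and_proof(leaves, index):
    """Return (root, proof) for leaves[index].

    The proof is a list of (sibling_hash, sibling_is_left) pairs,
    one per tree level, ordered from the leaf up to the root.
    """
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # pad odd levels by repeating the last node
        sibling = index ^ 1          # neighbour within the same pair
        proof.append((level[sibling], sibling < index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof

def verify_proof(leaf, proof, root):
    """Recompute the path from the leaf to the root using only the proof."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root

# Usage: a light client holding only `root` checks that tx2 is in the block.
txs = [b"tx0", b"tx1", b"tx2", b"tx3", b"tx4"]
root, proof = merkle_root_and_proof(txs, 2)
included = verify_proof(b"tx2", proof, root)
```

The verifier needs only the root stored in the block header plus a proof whose length grows with the logarithm of the number of transactions, which is why a light node can skip the block bodies.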

The sidechain scheme locks tokens on the main chain and creates corresponding tokens on the sidechain that act as IOUs for them. Transactions in these IOU tokens execute on the sidechain, and whoever ends up holding them on the sidechain can redeem the main-chain tokens. For a concrete case, look at the Ethereum Plasma MVP sidechain scheme on the right.

First, a Plasma smart contract is deployed on the Ethereum main chain. Assume Alice and Bob are both sidechain users. Alice initiates a main-chain transaction to deposit tokens into the Plasma contract, which locks them.

When the sidechain operator sees that Alice has deposited tokens, it creates sidechain tokens for her, which are the IOUs for the main-chain tokens. Note that the sidechain is also a blockchain, with its own consensus protocol and miners.

The sidechain in the Plasma MVP scheme uses PoA (Proof of Authority) consensus: a single Operator has the final say and produces the blocks. PoA is certainly not the only option; Lebo's Plasma sidechain uses DPoS consensus.

Once the deposit is made, Alice can use the tokens on the Plasma MVP chain for payments or transfers. For example, she can play games with Bob, winning or losing tokens, and they may play many games in quick succession, generating many transfer transactions. Sidechain transactions require consensus only among sidechain nodes. A sidechain is usually much smaller than the main chain, so transactions execute faster and cost less.

The Operator submits the header of each sidechain block to the Plasma contract on the main chain. No matter how many transactions a sidechain block contains, whether 1,000 or 10,000, only one transaction, the one recording the block header, occurs on the main chain. The Plasma contract on the main chain is thus equivalent to an SPV light node of the sidechain: it stores block headers so that it can verify the existence of sidechain transactions.

For example, Alice transfers tokens to Bob on the sidechain. Bob can then send a withdrawal request to the Plasma contract, including the SPV proof of the sidechain transaction, which shows that Alice did give him the tokens.

The Plasma contract verifies that the transfer does exist on the sidechain and then satisfies Bob's withdrawal request. This example illustrates how the layered approach moves a large number of transactions off-chain, onto a Layer 2 network.
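The deposit, header submission, and withdrawal steps described above can be sketched as a toy Python model. This is not the real Plasma MVP contract: the class and method names are hypothetical, the transaction format `b"sender->receiver:amount"` is invented for the example, and signatures, UTXOs, and exit challenge periods are all left out.

```python
import hashlib

def h(b: bytes) -> bytes:
    return hashlib.sha256(b).digest()

def merkle(leaves, index):
    """Return (root, proof) for leaves[index]; proof items are (hash, is_left)."""
    level, proof = [h(x) for x in leaves], []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sib = index ^ 1
        proof.append((level[sib], sib < index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof

def verify(leaf, proof, root):
    node = h(leaf)
    for sib, is_left in proof:
        node = h(sib + node) if is_left else h(node + sib)
    return node == root

class PlasmaContract:
    """Toy main-chain contract: locks deposits and stores sidechain block roots."""

    def __init__(self):
        self.locked = 0          # main-chain tokens held by the contract
        self.roots = []          # one Merkle root per sidechain block
        self.paid_out = set()    # sidechain transfers already redeemed

    def deposit(self, user, amount):
        self.locked += amount
        return ("deposit", user, amount)   # event the operator would watch for

    def submit_root(self, root):
        self.roots.append(root)            # one main-chain tx per sidechain block
        return len(self.roots) - 1

    def withdraw(self, block_no, tx, proof):
        amount = int(tx.rsplit(b":", 1)[1])
        if tx in self.paid_out or amount > self.locked:
            return False
        if not verify(tx, proof, self.roots[block_no]):
            return False                   # transfer is not in that sidechain block
        self.paid_out.add(tx)
        self.locked -= amount
        return True

# Usage: Alice deposits, plays on the sidechain, Bob withdraws with an SPV proof.
contract = PlasmaContract()
contract.deposit("alice", 10)
side_txs = [b"alice->bob:5", b"bob->alice:1", b"alice->bob:2"]
root, proof = merkle(side_txs, 0)
block_no = contract.submit_root(root)
ok = contract.withdraw(block_no, side_txs[0], proof)
```

However many transfers the sidechain block holds, the main chain sees only `submit_root` and the eventual withdrawals.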

6. The third scaling approach – sharding

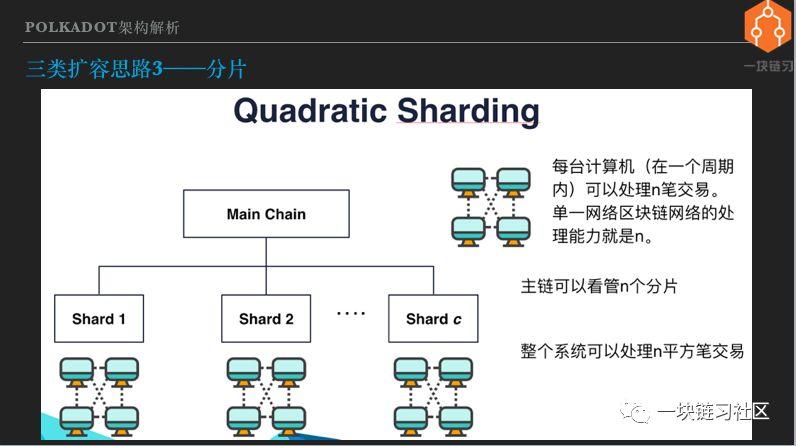

The third scaling approach is sharding. The principle is very simple: don't make every node execute every transaction. Divide the nodes into groups, that is, into shards. Multiple shards process transactions in parallel, and overall throughput increases.

Of course, you need a special chain to coordinate all the shards, generally called the main chain. It has a lot of work to do, which is described in detail later. The rough explanation is that without a main chain there is no connection between the shards; they would be completely independent blockchains, which has nothing to do with scaling.

The basic idea of sharding is very simple, but in practice it faces many complicated problems. To understand the public chain architectures analyzed later, you first need to understand these problems. And because these public chains all adopt PoS consensus, we discuss sharding problems and solutions in the PoS setting.

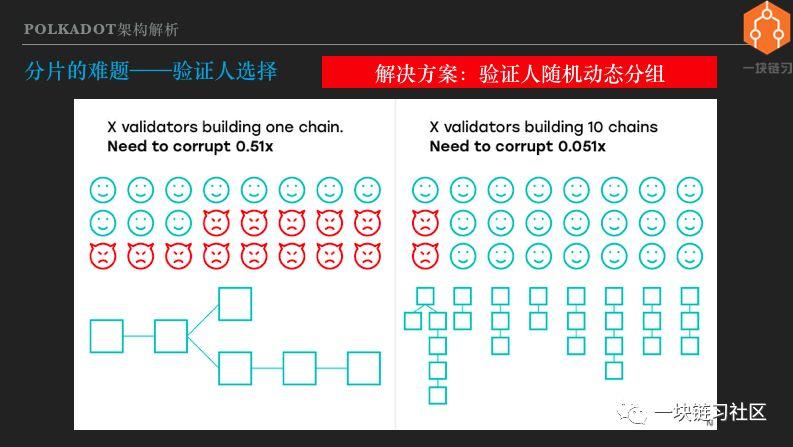

7. Sharding problems – validator selection

The first problem is that after sharding, each shard needs its own set of validators. Let's take a look at this diagram.

On a single chain, malicious validators can attack the system if they make up more than half. After sharding, an attacker only needs a majority within one shard to attack that shard. So the more shards there are, the lower the cost of attack, that is, the lower the security.

The solution is that the assignment of validators to shards is not fixed; validators are randomly selected and regrouped at regular intervals. Malicious validators then cannot know in advance which group they will be assigned to, and a rash attack will be punished, so the security of the system does not fall linearly with the number of shards.

The key to random, dynamic grouping of validators is a reliable source of random numbers, which has always been a complex and interesting problem in computer science. Generating reliable random numbers in a decentralized, Byzantine fault-tolerant way is very difficult and is an active topic in blockchain research.
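As a rough illustration of random regrouping, the Python sketch below shuffles validators into shard committees from a per-epoch seed. The function name and seed format are hypothetical, and the sketch simply assumes that an unbiased seed is available each epoch; producing that seed is the hard part the text describes. Python's `random` module is also not a cryptographic randomness source.

```python
import hashlib
import random

def assign_validators(validators, num_shards, epoch_seed: bytes):
    """Deterministically shuffle validators into `num_shards` committees.

    Every node that knows the same seed computes the same assignment,
    but nobody can predict it before the seed is revealed.
    """
    seed_int = int.from_bytes(hashlib.sha256(epoch_seed).digest(), "big")
    rng = random.Random(seed_int)
    shuffled = sorted(validators)   # canonical order before shuffling
    rng.shuffle(shuffled)
    # Deal validators out like cards so committee sizes differ by at most one.
    return [shuffled[i::num_shards] for i in range(num_shards)]

# Usage: regroup ten validators into three shards for one epoch.
validators = [f"v{i}" for i in range(10)]
committees = assign_validators(validators, 3, b"epoch-1")
```

Calling the function again with the next epoch's seed regroups everyone, so a coalition that happened to dominate one committee cannot count on staying together.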

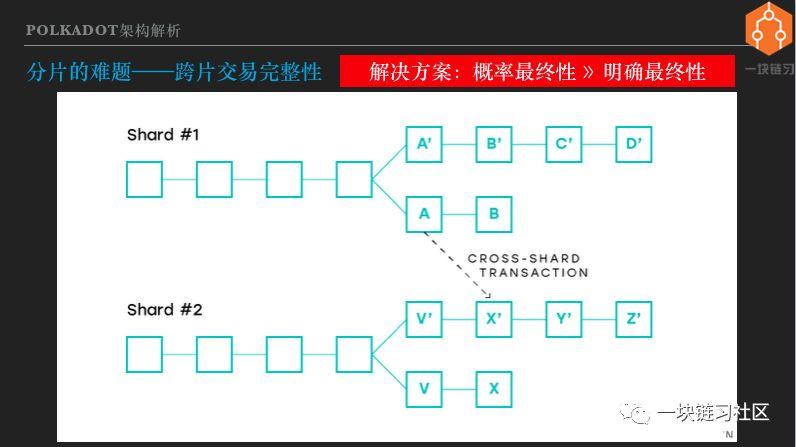

8. Sharding problems – cross-shard transaction integrity

In a sharded system, each shard can run one or more DApps, and DApps should be able to interoperate whether or not they are on the same shard. The first thing to make clear is what cross-shard interoperability means. Because shards are themselves blockchains, cross-shard is equivalent to cross-chain.

As everyone knows, a blockchain can be viewed as a state machine maintained by distributed consensus, and the state machine makes state transitions by executing transactions. Cross-chain interoperability should trigger state transitions on both sides: both interoperating chains execute transactions, and the resulting states are consistent with each other.

Put another way, a cross-chain transaction changes the state of two or more chains, and these changes either all succeed or all fail, with no intermediate state. This is very similar to the concept of distributed transactions in enterprise computing.

However, the participants in traditional distributed transactions are usually multiple databases, while the participants in cross-chain transactions are multiple blockchains. Listeners without a technical background may not be familiar with state machines and distributed transactions. Because the concept of cross-chain transactions is important for understanding the conclusions of this talk, I will explain it in non-technical language.

Suppose you want to transfer 10,000 yuan from an ICBC account to a CCB account. The transfer actually subtracts 10,000 yuan from the ICBC account and adds 10,000 yuan to the CCB account. ICBC and CCB each have a database storing account balances, so there must be a mechanism guaranteeing that the two database operations, one subtraction and one addition, either both succeed or both fail under all circumstances.

Without such a guarantee, the ICBC account might be debited while the CCB account is not credited: you would lose 10,000 yuan and certainly not accept it. Or the ICBC account might not be debited while the CCB account is credited: you would have an extra 10,000 yuan, and the bank would certainly not accept it.

This is called the integrity, or atomicity, of distributed transactions. Simple? In fact it is very hard to achieve, because the integrity of the transaction must hold under all kinds of extreme conditions, whether ICBC or CCB suffers a power outage, network disconnection, software crash, or anything else. On a blockchain, what is transferred is tokens.

Suppose a token is issued on chain A, and 10 tokens are transferred to chain B via a cross-chain transaction. After the cross-chain transaction completes, 10 tokens are frozen on chain A and 10 tokens are added on chain B. Under any conditions, these two state changes must either both succeed or both fail.
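The all-or-nothing requirement can be sketched with the classic two-phase commit pattern from enterprise computing. The Python below is a toy model under loud assumptions: a single trusted coordinator function, hypothetical `Chain` objects with an `online` flag standing in for outages, and no forks. Real cross-chain protocols cannot rely on such a coordinator, which is exactly why the problem is harder on blockchains.

```python
class Chain:
    """Toy ledger that supports prepare/commit/abort for one transfer leg."""

    def __init__(self, name, balances):
        self.name = name
        self.balances = dict(balances)
        self.pending = {}      # tx_id -> (account, delta) awaiting a decision
        self.online = True     # set to False to simulate an outage

    def prepare(self, tx_id, account, delta):
        # Phase 1: check that the change is possible, but do not apply it yet.
        if not self.online or self.balances.get(account, 0) + delta < 0:
            return False
        self.pending[tx_id] = (account, delta)
        return True

    def commit(self, tx_id):
        # Phase 2: apply the change that was prepared earlier.
        account, delta = self.pending.pop(tx_id)
        self.balances[account] = self.balances.get(account, 0) + delta

    def abort(self, tx_id):
        self.pending.pop(tx_id, None)

def cross_chain_transfer(tx_id, src, dst, sender, receiver, amount):
    """Move `amount` from sender on src to receiver on dst, atomically."""
    legs = [(src, sender, -amount), (dst, receiver, amount)]
    prepared = []
    for chain, account, delta in legs:
        if chain.prepare(tx_id, account, delta):
            prepared.append(chain)
        else:
            for c in prepared:         # one leg failed: undo every prepared leg
                c.abort(tx_id)
            return False
    for chain in prepared:             # both legs are ready: apply both
        chain.commit(tx_id)
    return True

# Usage: 10 tokens move from chain A to chain B in one atomic step.
chain_a = Chain("A", {"alice": 10})
chain_b = Chain("B", {})
done = cross_chain_transfer("tx1", chain_a, chain_b, "alice", "bob", 10)
```

If either leg cannot be prepared, nothing is applied on either ledger, which is the "both succeed or both fail" property the bank example asks for.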

Because blockchains can fork, cross-shard transactions are more complex than traditional distributed transactions. Look at the picture. Suppose the part of a cross-shard transaction on shard 1 is packed into block A, and the part on shard 2 is packed into block X'. Either shard may fork, and block A or block X' may be discarded. That is, the cross-shard transaction may partially succeed and partially fail, destroying its integrity.

How do we solve this problem? Let us analyze it: the root cause of the integrity problem in cross-chain transactions is that the parts of the transaction are packed into blocks, but chains can reorganize and blocks can become orphans.

To put it bluntly, the transaction is in a block, but that cannot be relied on; it can still be undone. The formal statement is that there is no clear finality. Finality means that a block is certain to be included in the blockchain.

In the Bitcoin blockchain, the more blocks follow a block, the less likely it is to be reversed or discarded, but this can never be 100% certain, so it is called probabilistic finality or eventual consistency. The solution to this problem is a mechanism that gives blocks clear, unambiguous finality.
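To put a number on "less likely but never zero", the Python sketch below uses the simplified gambler's-ruin formula from the Bitcoin white paper: an attacker with a fraction q of the hash power who is z blocks behind ever catches up with probability (q/p)^z, where p = 1 - q. The function name is made up for this example, and the formula ignores the refinements in the paper's fuller analysis.

```python
def catch_up_probability(q: float, z: int) -> float:
    """Probability that an attacker z blocks behind ever catches up.

    q is the attacker's share of hash power; the honest share is p = 1 - q.
    With q >= p the attacker always catches up eventually.
    """
    p = 1.0 - q
    if q >= p:
        return 1.0
    return (q / p) ** z

# Usage: how the risk shrinks as confirmations pile up, for a 10% attacker.
risk_by_depth = {z: catch_up_probability(0.1, z) for z in (1, 3, 6, 12)}
```

The probability shrinks geometrically with depth but never reaches zero, which is what probabilistic finality means.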

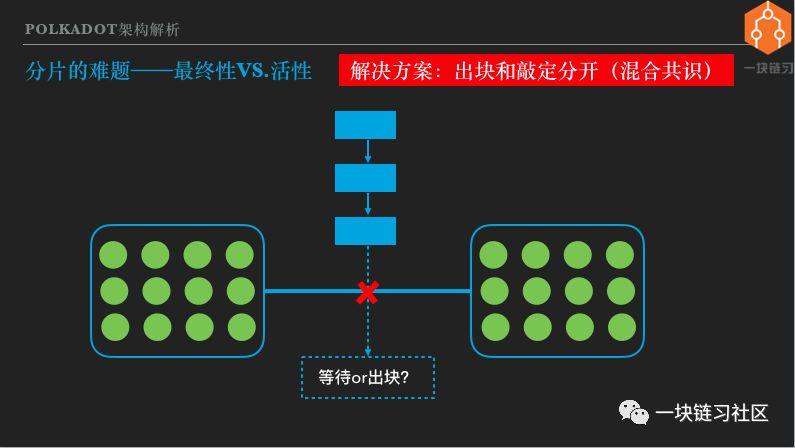

9. Sharding problems – finality vs. liveness

To finalize a block is to give it finality. The simple way is to finalize each block as it is produced. This is what Cosmos's Tendermint consensus does. However, this practice causes problems in special circumstances.

Look at the picture: a blockchain running Tendermint consensus was producing blocks normally. Suddenly a submarine cable breaks and the Internet splits into two parts, each containing half of the validator nodes. Tendermint consensus requires collecting signatures from more than two-thirds of the validators.

After the split, each part of the network can collect signatures from at most half of the validators, so block production stops; that is, the blockchain loses liveness. Some people think this is tolerable: in such a special situation, just stop and wait until the network returns to normal before continuing.
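The arithmetic behind the halt is easy to check. The short Python sketch below tests the "more than two-thirds" rule for each side of a network split; it counts validators equally (real systems typically weight by stake), and the function names are hypothetical.

```python
def can_finalize(signers: int, total: int) -> bool:
    """True if strictly more than two-thirds of all validators signed."""
    return 3 * signers > 2 * total   # integer form avoids rounding issues

def partition_status(total: int, partition_sizes):
    """For each side of a network split, report whether it can still finalize."""
    return [can_finalize(size, total) for size in partition_sizes]

# Usage: 100 validators split evenly by a cable cut.
even_split = partition_status(100, [50, 50])
```

With an even split neither side reaches the threshold, so no block can be finalized until the network heals. With an uneven split at most one side can, which is why two conflicting blocks can never both be finalized.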

When a submarine cable breaks, the Internet, telephone calls, and video conferences are all affected, so why can't the blockchain pause too? Others believe that halting block production is unacceptable and that the blockchain must always stay live. Then what should we do? The solution is to separate block production from finalization, which is also known as hybrid consensus.

In the network split just described, nodes in the two separate networks can continue producing blocks, but there are not enough validators participating, so the blocks cannot be finalized. When the network is restored, it is decided which blocks are finalized, so that both liveness and finality are achieved.

Moreover, hybrid consensus lets individual nodes take turns producing blocks quickly, while the finalization process can be slower and involve a large number of nodes, which ensures decentralization and makes attacks and collusion harder, that is, ensures security. So hybrid consensus also balances performance and security. Both Ethereum 2.0 and Polkadot use hybrid consensus.

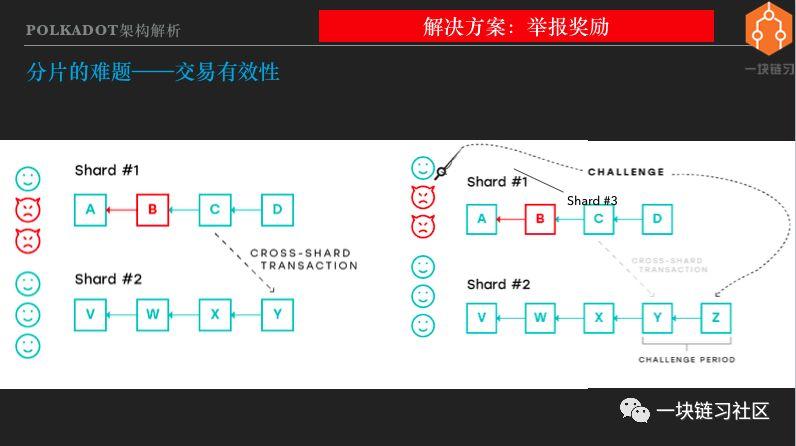

10. Sharding problems – transaction validity

Another sharding problem is transaction validity. The issue is to prevent invalid transactions from entering blocks and becoming part of the historical truth the blockchain maintains.

For example, suppose I am a super miner controlling most of the hash power, and I want to forge a transaction that transfers away the bitcoin at someone else's address. Can I do it? The answer is no.

Because the transaction lacks a signature from the private key corresponding to the address, it is invalid, and the block containing it is also invalid and will not be accepted by other nodes. Even though I control most of the hash power and can mine the longest chain, all I have built is a long fork.

The many Bitcoin wallets and exchanges will not recognize my fork. So a 51% attack cannot steal anyone's BTC or create bitcoin out of thin air; at most it enables a double-spend attack. In short, there is no transaction validity problem in the Bitcoin network.

So why has a problem that was solved perfectly a decade ago come back? Because nodes of public chains such as Bitcoin hold all the data, they can verify transaction validity completely independently. Now that the chain is split into multiple shards, a node stores only part of the data and cannot verify transaction validity independently.

Look at the picture on the left. There are two shards. Shard 1 has been taken over by malicious validators, who pack an invalid transaction into block B, for example one that creates a large number of tokens out of thin air for their own address. In the next block C, the attacker initiates a cross-shard transaction that sends the tokens to a DApp on shard 2, perhaps a decentralized exchange. From shard 2's point of view there is nothing wrong with the transactions in block C, and shard 2 has no data from before block C, so it cannot verify the validity of the transaction.

Below we introduce one solution to transaction validity in a sharded environment, called report-and-reward. There are other schemes as well, but they are unrelated to our topic.

Look at the picture on the right. Shard 1 is controlled by malicious validators, but it has at least one honest validator. Shard 2 cannot verify the validity of cross-chain transactions, so it chooses to trust shard 1 and packs the cross-chain transaction. At this point the honest node in shard 1 can step forward and report: block B is invalid, and I have the evidence.

When the system accepts the report, it punishes the malicious validators in shard 1, confiscating the tokens they staked and rewarding the whistleblower. So why do validators on some blockchains have to wait several months to withdraw their stake? Mainly to leave enough time for reports to be made and confirmed.
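The report-and-reward flow can be sketched in a few lines of Python. Everything here is a hypothetical simplification: the block is a plain dictionary, the validity check is a stand-in callback, the 10% reward share is an arbitrary number, and real protocols define their own evidence formats and penalties.

```python
def handle_report(stakes, reporter, block, signers, is_valid, reward_percent=10):
    """Re-check a reported block; if invalid, slash its signers and pay the reporter.

    stakes:   validator -> staked tokens (mutated in place)
    is_valid: callback that re-executes the block (assumed to be available)
    Returns the total amount slashed.
    """
    if is_valid(block):
        return 0                                  # false alarm: nothing changes
    slashed = sum(stakes.pop(v, 0) for v in signers)
    reward = slashed * reward_percent // 100      # integer math, no rounding drift
    stakes[reporter] = stakes.get(reporter, 0) + reward
    return slashed

def can_withdraw(requested_at, now, unbonding_period):
    """Stake stays locked (and slashable) until the reporting window has passed."""
    return now - requested_at >= unbonding_period

# Usage: block B mints tokens it was never allowed to create.
stakes = {"mal1": 100, "mal2": 100, "honest": 100}
block_b = {"minted": 1000, "allowed_mint": 0}
check = lambda b: b["minted"] <= b["allowed_mint"]
slashed = handle_report(stakes, "honest", block_b, ["mal1", "mal2"], check)
```

The unbonding delay in `can_withdraw` is what keeps a dishonest validator's stake within reach while reports are still being made and confirmed.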

Above we introduced four sharding problems and their corresponding solutions. In fact, sharding faces more problems than these; for lack of time they are not all listed here.

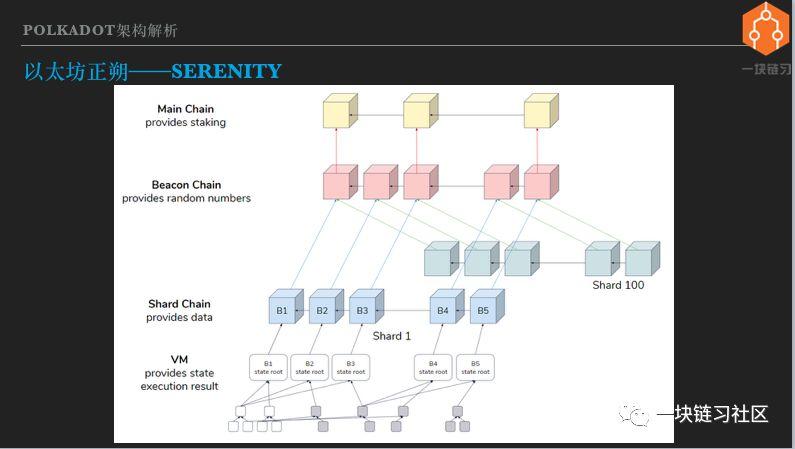

11. Ethereum's answer – Serenity

The idea of the next generation of Ethereum's layout1 expansion is fragmentation. Regarding the next generation of Ethereum, the information is very confusing, even the names are not uniform. There are Ethereum 2.0, Serenity, Shasper, Casper Ethereum, etc. We are collectively called Serenity.

What you see is the Serenity architecture diagram, produced by the senior Taiwanese Ethereum researcher Hsiao-Wei Wang. Reading from the top down, the top layer is the PoW main chain, which is the Ethereum currently in operation. Serenity does not replace the PoW chain, but is deployed alongside it.

But in the long run, Serenity will not depend on the PoW chain. The three layers below the PoW chain belong to Serenity and correspond to the three phases of Serenity's evolution.

The first is the Beacon Chain, whose main function is to manage validators. Once the beacon chain is online, anyone who wants to become a Serenity validator moves ETH from the PoW chain to the beacon chain. This still uses the sidechain approach: the beacon chain deploys a smart contract on the PoW main chain.

The transfer of ETH to the beacon chain is one-way; it cannot be moved back to the PoW chain. By holding ETH on the beacon chain, staking it, and running a node, you can become a validator. To achieve full decentralization, the threshold for Serenity validators is very low: only 32 ETH need to be staked. The validator set will therefore be very large, on the order of tens of thousands to hundreds of thousands.

The beacon chain is also responsible for generating the random numbers used to group validators and to select block proposers. The beacon chain implements the PoS consensus protocol, covering both its own consensus and that of all shard chains, and it rewards and penalizes validators. It is also the transfer station for cross-shard transactions. The beacon chain is expected to go online at the end of this year or early next year.

There are currently several teams developing beacon chain node software, and several of them have deployed test networks. In the next phase, a public, long-running test network will be deployed, and the nodes developed by the different teams will be tested together.

Beneath the beacon chain are multiple shard chains; the picture shows 100 shards. The shard chains are regarded as Serenity's data layer, responsible for storing transaction data and maintaining data consistency, availability and liveness, that is, ensuring that blocks can always be produced and the chain never deadlocks. The launch date of the shard chains is still uncertain.

Below the shard chains is the virtual machine, which executes smart contracts and transfer transactions and changes the state, that is, it reads and writes shard chain data. A very important design decision in Serenity is to decouple the data layer (the shard chains) from the logic execution engine (the virtual machine).

Decoupling brings many benefits, such as separate development, separate launches and separate upgrades. The Serenity virtual machine will use wasm to improve performance and support multiple programming languages.

How does Serenity deal with the four sharding problems mentioned earlier? First, it manages the validator pool on the beacon chain and randomly assigns a group of validators to each shard chain. It uses a hybrid consensus in which validators produce blocks and Casper FFG provides finality. It uses the report-and-reward method to ensure transaction validity.
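Random assignment of validators from one shared pool can be illustrated with a short Python sketch. It is a simplification under stated assumptions: `assign_committees` is a hypothetical helper, and Python's seeded `random.Random` merely stands in for whatever randomness the beacon chain actually produces.

```python
import random


def assign_committees(validators, shard_count, seed):
    """Shuffle the validator pool and deal it out evenly across the shards."""
    rng = random.Random(seed)  # stand-in for the beacon chain's random number
    shuffled = list(validators)
    rng.shuffle(shuffled)
    # Shard i receives every shard_count-th validator, starting at position i.
    return {shard: shuffled[shard::shard_count] for shard in range(shard_count)}


pool = [f"v{i}" for i in range(12)]
committees = assign_committees(pool, shard_count=4, seed=42)
```

Because the grouping depends on a random number the attacker cannot predict, malicious validators cannot choose to concentrate on one shard.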

12. Gavin Wood's new journey – Polkadot

We are halfway through this sharing, and it is finally the turn of the protagonist, Polkadot. Gavin Wood is the soul of Polkadot. Most of you probably know him well already; if you don't, a quick search online will tell you, so I won't introduce him here.

Gavin Wood is the founder and current president of the Web3 Foundation, and Polkadot is the Web3 Foundation's core project. The relationship is similar to that between Ethereum and the Ethereum Foundation.

Web3 itself needs some introduction. In the documents of the Web3 Foundation, Polkadot and related projects, the wording of the web3 vision is not always the same, but it always carries two layers of meaning.

The first layer: web3 is a serverless, decentralized Internet. Serverless also means decentralized, because in the web3 computing architecture the participants, or nodes, are peers: there is no distinction between server and client, and all nodes take part, to a greater or lesser extent, in forming and recording the network consensus. What is the use of a decentralized Internet?

The answer is the second layer of meaning: everyone can control their own identity, assets and data.

Controlling your own identity means that no person or organization is needed to grant it, and no person or organization can impersonate or freeze it. Controlling your own assets means they cannot be taken from you and you are free to dispose of them. Controlling your own data means that everyone can generate, store, hide and destroy personal data as they wish, and no organization can use that data without the owner's permission.

The web3 vision is not unique to the Web3 Foundation or the Polkadot project. Many blockchain projects, including Bitcoin and Ethereum, share a similar vision under different names, such as the open network or the next-generation Internet. The name is not important. What matters is that you think about what the web3 vision means: is that the Internet you want?

As the saying goes: Be the change you want to see in the world. My own rendering is: build the world you want. If web3 is a vision you share, then get involved and work for it.

As Gavin Wood and the Web3 Foundation describe it, Polkadot is the backbone of web3, its infrastructure, and the path to the web3 vision.

Substrate is an open source blockchain development framework that grew out of the development of the Polkadot project. It can be used to build chains for the Polkadot ecosystem or blockchains for other purposes.

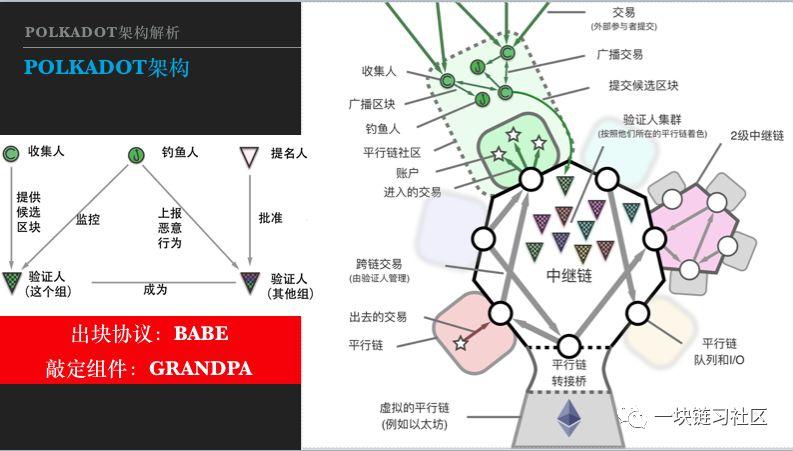

13. Polkadot architecture

Next we introduce the Polkadot architecture. Both pictures are from the Chinese version of the Polkadot white paper, translated by Yue Lipeng.

Looking at the big picture on the right, the basic network structure of Polkadot is a star, also called hub-and-spoke. At the center of the star is the Polkadot relay chain, surrounded by numerous parachains.

Looking at the small picture on the left, participants in the Polkadot network play four roles: validator, nominator, collator, and fisherman.

A DApp can be a smart contract deployed on a parachain, or it can be an entire parachain. A user initiates a transaction on a parachain; the transaction is gathered by a collator, packaged into a block, and submitted to a group of validators for verification.

This group of validators does not come from the parachain but from the validator pool managed by the relay chain, and is assigned to the parachain by random grouping. Each parachain has an egress queue and an ingress queue. If a user initiates a cross-chain transaction, the transaction is placed in the egress queue of the source parachain and then moved into the ingress queue of the target parachain by that chain's collator.

The collator of the target parachain executes the transaction and generates a block, which is finalized by the validator group. Polkadot adopts a hybrid consensus protocol. The block production protocol is abbreviated BABE, as in baby; the finality protocol is abbreviated GRANDPA, as in grandfather.
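The queue-based message flow described above can be modelled with a toy Python sketch. Everything in it is an illustrative assumption: the `Parachain` class, the `route` function and the message format are hypothetical, and validation and finalization are left out entirely.

```python
from collections import deque


class Parachain:
    def __init__(self, name):
        self.name = name
        self.egress = deque()   # outgoing cross-chain messages
        self.ingress = deque()  # incoming cross-chain messages
        self.executed = []      # messages already applied on this chain

    def send(self, target, payload):
        """A user initiates a cross-chain transaction on this chain."""
        self.egress.append((target, payload))

    def execute_ingress(self):
        """Apply every pending incoming message, oldest first."""
        while self.ingress:
            self.executed.append(self.ingress.popleft())


def route(chains):
    """Move each message from its source egress queue to the target ingress queue."""
    for chain in chains.values():
        while chain.egress:
            target, payload = chain.egress.popleft()
            chains[target].ingress.append((chain.name, payload))


chains = {"A": Parachain("A"), "B": Parachain("B")}
chains["A"].send("B", "transfer 10 tokens")
route(chains)
pending = len(chains["B"].ingress)
chains["B"].execute_ingress()
```

Keeping the two queues separate means the source chain never writes to the target chain's state directly; the target chain applies the message in one of its own blocks.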

Speaking of hybrid consensus, some of you may ask: if block production is fast and finalization is slow, won't more and more blocks pile up waiting to be finalized? No, because GRANDPA can finalize multiple blocks in each round and so catch up. The baby is lively and light on its feet; the grandfather takes big, steady strides, and his word is final. Old and young complement each other.
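A small simulation shows why finalizing several blocks per round keeps the backlog bounded. It is a toy model, not the real protocols: one block is produced per tick, a finality round completes every `finality_interval` ticks, and the function name and parameters are hypothetical.

```python
def backlog_over_time(ticks, finality_interval, finalize_all):
    """Return the number of unfinalized blocks after each tick."""
    head = 0        # height of the newest block
    finalized = 0   # height of the newest finalized block
    history = []
    for tick in range(1, ticks + 1):
        head += 1  # block production: one new block per tick
        if tick % finality_interval == 0:
            # A finality round completes: finalize everything up to the head,
            # or only a single block in the comparison case.
            finalized = head if finalize_all else finalized + 1
        history.append(head - finalized)
    return history


batch = backlog_over_time(9, finality_interval=3, finalize_all=True)
single = backlog_over_time(9, finality_interval=3, finalize_all=False)
```

With batch finalization the backlog never exceeds the number of blocks produced between two rounds, whereas finalizing one block per round lets it grow without limit.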

In addition to parachains, two other kinds of chain can connect to the relay chain. One is the bridge, which connects existing blockchains that cannot connect directly, such as Ethereum and Bitcoin, to the relay chain. From the relay chain's perspective, a bridge is a parachain.

From the perspective of Ethereum or Bitcoin, the bridge is a sidechain. The other kind is the secondary relay chain, which would give the system unlimited scalability. However, the secondary relay chain is still only an idea, with no concrete design.

We have already covered the roles of collators and validators. So what do nominators and fishermen do? A nominator is a holder of DOT, Polkadot's native token, who wants to stake DOT for a return but cannot become a validator, either because they hold too little DOT or because they lack the expertise to run and maintain a validator node.

The system therefore offers another way to participate: the holder chooses a validator they trust, stakes their DOT through that validator, and shares in the validator's income. This raises the overall staking ratio, improves system security, and makes income distribution fairer. Polkadot's economic model is a complicated and interesting topic, but we will not go further into it here.

I introduced the transaction validity problem of sharded architectures and the report-and-reward solution earlier, so the role of the fisherman is not hard to understand: it monitors for and reports invalid transactions, and earns rewards for doing so. It sounds simple, but it is extremely complicated to implement.

Some of you may imagine reporting like this: you send an email to the Web3 Foundation saying, I found that someone has packaged an invalid transaction; the evidence is attached. A few days later the Web3 Foundation writes back: your report has been confirmed, the offender has been punished, and the reward will be sent to your address; thank you for supporting our work.

But reporting on a blockchain is nothing like this. A fisherman is a software process that monitors the network for illegal behavior and, once it finds some, submits a report transaction to the blockchain. A report transaction goes through the consensus process as well: it is verified by more than 2/3 of the validators and packaged into a block, and the penalties and rewards are themselves blockchain transactions.

The entire process is automatic and decentralized. There are many complex issues here, such as how to give fishermen the right incentives. A fisherman is like a policeman. You might think it is simple: catch a bad guy, collect a bonus.

But then a crowd of police officers watches the network every day, nobody dares to do evil, and the police never earn a bonus. The police have operating costs: they must verify and store large amounts of data, and they cannot keep going without income. Once the police have all left for other work, the bad guys show up. So you might think: pay the police a basic salary plus commission.

Fine, then I can register as a policeman and collect the basic salary without verifying or storing any transaction data at all. My cost is zero, and the basic salary is pure profit. When the bad guys show up, I say sorry, I didn't see it, or my hard drive just happened to fail. How should the system punish me?

There is also the possibility of frivolous reports. Handling a report costs the system resources, so frivolous reporting becomes a loophole for spam attacks. And what should happen if a report transaction can itself be reported? So in a decentralized environment the reporting mechanism is complex. I have not yet seen a specific description of how Polkadot's fishermen will work.

In the Polkadot network, each parachain handles its own transaction execution and data storage, and parachains can interoperate, which achieves the goal of sharding. So I regard Polkadot as a sharding solution as well. Compare it with Serenity and you will find that Polkadot is technically more complex.

Serenity's shards are homogeneous: they use the same consensus protocol and have uniform capacity. They are like standard shipping containers offered to DApps, all with the same specifications. The developer picks a shard and puts the DApp in it.

Polkadot is the web3 backbone, and it cannot and should not require parachains to be uniform. A parachain can decide which consensus protocol to use, which economic and governance models to adopt, what hardware and network configuration to run on, and so on. In short, parachains are autonomous, and Polkadot can be seen as an alliance or federation of parachains.

The Polkadot relay chain supports connecting heterogeneous parachains and making them interoperate, which makes it more complex than Serenity's beacon chain. What this technical complexity buys is flexibility in parachain development: developers need not force their application into a fixed chain design, and can design and build the optimal parachain for their specific needs and constraints.

14. A different road to the same goal – Cosmos

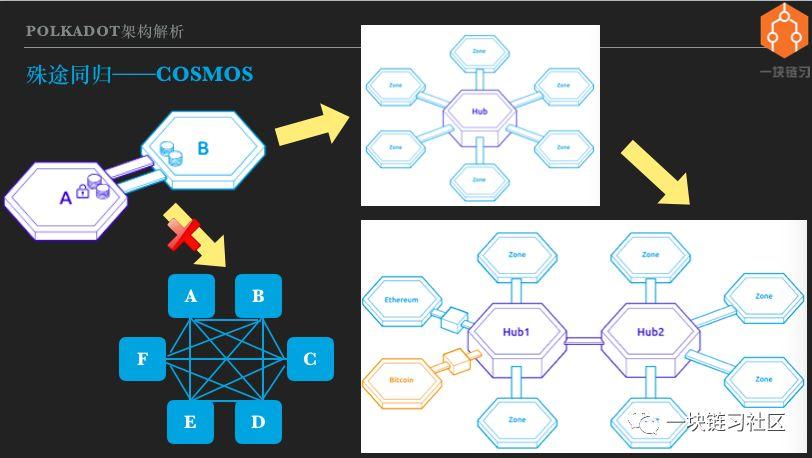

Let's introduce Cosmos, starting from sidechains. In the upper left picture, A and B are two chains that are sidechains of each other. That is, chain A contains an SPV light client of chain B, so chain A can verify chain B's transactions. Conversely, chain B contains an SPV light client of chain A, so chain B can verify chain A's transactions.

Because the two chains are mutual sidechains, the tokens issued on A and B can be transferred between them. Now extend from two chains to many: A and B become A/B/C/D/E/F. The straightforward extrapolation is to keep making every pair of chains mutual sidechains, which produces the structure in the lower left picture.

However, this causes many problems. Each chain must embed light clients of all the other chains and synchronize all of their block headers, which is a heavy burden. And every time a chain is added, changes are needed on all the other chains. As the number of blockchains n increases, the number of pairwise connections grows quadratically, as n*(n-1)/2, which is obviously not feasible.

The solution is the structure shown in the upper right picture: put a Hub in the middle. The Hub is also a blockchain, and it is a mutual sidechain of every other chain. That is, tokens on each chain can be transferred to the Hub and then on to other chains through the Hub. The complexity of interconnecting the network is then linear in the number of blockchains.
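The difference between the two topologies is easy to check numerically. The following Python sketch counts connections for the six chains A to F; the function names are illustrative only.

```python
def pairwise_links(n):
    """Connections needed when every pair of n chains is linked directly."""
    return n * (n - 1) // 2


def hub_links(n):
    """Connections needed when each of n chains links only to a central Hub."""
    return n


for n in (6, 20, 100):
    print(n, pairwise_links(n), hub_links(n))
```

In the pairwise design each chain also has to maintain n - 1 light clients, while in the Hub design each chain maintains only one, the light client of the Hub.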

This is what Cosmos does. In Cosmos terminology, a chain that connects to the Hub is called a Zone. A zone must meet two conditions to connect to the Hub. The first is to comply with the Cosmos standard protocol, IBC, the Inter-Blockchain Communication protocol. The second is that the zone must have instant finality, to ensure cross-chain consistency.

Cosmos also supports interconnecting multiple Hubs. Existing public chains can connect to the Cosmos Hub through protocol adaptation; Cosmos calls the protocol adaptation gateway a Peg Zone. The resulting structure is what the lower right picture shows.

We derived the Cosmos architecture from sidechains. But looking back, Cosmos zones each handle their own transaction execution and data storage, and zones can interoperate, which also achieves the goal of sharding. So I regard Cosmos as a sharding solution too.

Some may disagree with this classification. That does not matter: Cosmos is Cosmos, and classification is only a tool for understanding it better. Different perspectives and interpretations allow different classifications, with no absolute right or wrong.

The Cosmos Hub and other zones developed with the Cosmos SDK use the Tendermint consensus protocol, in which block production and finalization happen in one step: a block is produced only once it carries the signatures of more than 2/3 of the validators. The advantage is that it is simple and fast. Block time can reach seconds or even sub-seconds, with instant finality.
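The "more than 2/3" rule can be written as a one-line check. The Python sketch below is an illustration only: it assumes signatures are weighted by each validator's voting power, and the `has_supermajority` name and the sample validator set are hypothetical.

```python
def has_supermajority(voting_power, signers):
    """True if the signers hold strictly more than 2/3 of the total voting power."""
    total = sum(voting_power.values())
    signed = sum(power for name, power in voting_power.items() if name in signers)
    # Integer comparison avoids floating point rounding at the exact 2/3 boundary.
    return 3 * signed > 2 * total


three = {"a": 10, "b": 10, "c": 10}
four = {"a": 10, "b": 10, "c": 10, "d": 10}
```

Note that exactly 2/3 is not enough: with three equal validators, two signatures do not commit a block, while with four equal validators, three signatures do.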

However, when the network is partitioned, Tendermint consensus may stop producing blocks. The Cosmos Hub and each zone have their own validator sets, so no dynamic random grouping of validators is needed. How, then, does Cosmos guarantee the validity of cross-chain transactions? As I understand it, Cosmos sidesteps this problem.

The Hub does not verify that a transaction is valid; it can only verify that it exists. If a zone is controlled by malicious validators, users' assets on that zone are unsafe and may be stolen. But this should be seen not as a vulnerability in Cosmos, rather as a design choice.

Cosmos is often compared with Polkadot, but architecturally Polkadot is more similar to Serenity. An article in the Orange Book a few days ago compared the three to three villages, which was very apt. But from the perspective of DApp development, especially heavyweight DApps being built this year and next, the choice will mainly be between Polkadot and Cosmos.

Technically, Cosmos is much simpler than Polkadot or Serenity. I do not mean simple in a derogatory sense. Provided it meets the requirements, a technical solution should be as simple as possible. Cosmos achieves sharding with a relatively simple solution; isn't that good?

It really is good, so I am also optimistic about Cosmos, and it will suit some types of DApp very well. But as we have stressed repeatedly, every gain comes with a loss. Cosmos chose simplicity and sacrificed security. The security level of a PoS blockchain is determined by its total market value and its staking ratio.

After Polkadot goes live, suppose DOT's total market capitalization is $1 billion and half of it is staked on the network. A double-spend attack on the Polkadot mainnet would then theoretically require at least about $167 million, one third of the $500 million staked, and in practice far more. That is obviously a huge sum, so the Polkadot network is very secure, and so are its cross-chain transactions.
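The $167 million figure can be reproduced with a short calculation. The Python sketch below assumes, as the figure implies, that an attacker needs one third of the staked value; the function name and the use of exact fractions are illustrative choices.

```python
from fractions import Fraction


def min_attack_cost(market_cap, staked_ratio, attack_fraction=Fraction(1, 3)):
    """Theoretical minimum stake an attacker must control, in the same unit as market_cap."""
    return market_cap * staked_ratio * attack_fraction


# $1 billion market cap, half of it staked, one third of the stake needed.
cost = min_attack_cost(1_000_000_000, Fraction(1, 2))
millions = round(cost / 1_000_000)
```

This is a lower bound only: buying that many tokens on the open market would push the price up, which is why the real cost would be much higher.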

After the Cosmos mainnet launches, the Atom token will also have a high market value, but the Atom staked on the network only secures the Cosmos Hub. Zones and other Hubs will issue their own tokens and build their own economic models for security.

But a zone is usually one specific decentralized application, whose scale and market value cannot match those of a platform such as Cosmos or Polkadot. It can therefore be expected that the security level of a Cosmos zone will be lower than that of the Cosmos Hub.

When you carry out a cross-chain transaction on Cosmos, you need to trust the source zone, the target zone and the Hub. If the transaction passes through several Hubs, every one of them must be trusted.
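One way to reason about this is to treat a cross-chain transfer as only as safe as the least secure chain on its path. The Python sketch below makes that assumption explicit; the `weakest_link` helper and the staked values (in millions of dollars) are hypothetical illustration data, not real figures.

```python
def weakest_link(path, staked_value):
    """Return the chain on the path with the lowest staked value."""
    return min(path, key=lambda chain: staked_value[chain])


# Every chain on the path must be trusted: source zone, Hub, target zone.
path = ["zone A", "Hub", "zone B"]
staked_value = {"zone A": 5, "Hub": 500, "zone B": 20}  # hypothetical numbers
riskiest = weakest_link(path, staked_value)
```

Under this simplified view, a well-secured Hub does not help if the source or target zone is cheap to attack.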

So there are more blockchains to trust, and some of them may not have a high level of security. Cosmos therefore does not minimize trust. The level of security is lower, but is the lower level still sufficient? It may or may not be; that varies from person to person and from application to application.

Cosmos certainly understands this shortcoming, and it is said that future releases will provide security for zones. But that will be very difficult to implement. Providing security for zones requires a large number of validators, which is only possible if the consensus protocol is changed to separate block production from finalization. Random dynamic grouping of validators and transaction validity would also have to be handled. With those changes, Cosmos would be almost as complex as Polkadot.

15. Comparing DApp support

So which of Serenity, Polkadot and Cosmos is more suitable for DApp development? Let's compare them.

First, the development method. All three platforms let you build DApps with smart contracts. Polkadot and Cosmos add a new way of developing DApps, which is to build an application-specific blockchain. Cosmos's tool for application chains is the Cosmos SDK, which currently supports development in Go. Polkadot's tool is Substrate, which currently supports development in Rust.

Substrate is a complete application chain development tool with a complete application chain framework. Gavin Wood demonstrated launching an application chain in 15 minutes on a brand new computer. In addition, every Substrate module can be customized or replaced, which makes it both powerful and flexible.

By comparison, the Cosmos SDK is somewhat thinner. It mainly provides core modules such as the Tendermint consensus engine, the IBC inter-chain communication protocol, and tokens. Most of the upper layers have to be developed by the team itself.

On performance, Serenity offers about 100 tps per shard, and transactions with higher gas prices are still processed first. The Polkadot relay chain should be able to reach thousands of tps. A parachain chooses its own consensus algorithm, hardware and network, so in theory it has no performance ceiling. The Cosmos Hub and most zones use Tendermint, which can reach thousands of tps.

On interoperability, Serenity is the same as Ethereum 1.0: smart contracts can call each other. A Polkadot parachain interoperates with other parachains through the relay chain and with other chains through bridges.

A Cosmos zone can exchange tokens with other zones through the Hub, and with other chains through peg zones. IBC messages also carry data fields, much like email attachments. By extending the data fields, data other than tokens can be passed between zones as well.

On access, Serenity is the same as Ethereum 1.0: developers deploy their smart contracts themselves. Connecting to the Polkadot relay chain requires winning a slot auction with DOT and staking a large amount. Cosmos is similar to Polkadot: access is obtained by staking Atom in an auction.

Then there is security. As mentioned above, Serenity's shards are like standard containers: a DApp is put in, and the system guarantees its security. A Cosmos application chain, by contrast, is responsible for its own security whether or not it connects to the Hub. An application chain developed with Substrate has two options: connect to the relay chain as a parachain and be secured by Polkadot, or operate independently and secure itself.

Finally, DApp upgrades. Serenity, like Ethereum 1.0, does not support smart contract upgrades. Many people may be used to this, but I think the lack of upgrade support is a big flaw that can lead to serious security problems. First, no code is free of bugs.

Smart contract languages such as Solidity are not friendly to formal verification, and even with formal verification it is unrealistic to cover 100% of logic paths. Second, a DApp is an Internet application, and Internet applications should evolve on demand and iterate.

Some people think that a smart contract is an agreement and therefore cannot be changed. In fact, real-world contracts contain provisions allowing them to be cancelled or amended by mutual consent. Imagine two companies that have signed a contract and now both agree to modify it, only to find that the contract itself makes modification impossible. How ridiculous that would be.

Not to mention the idea that code is law: laws are not static and can be repealed or amended, yet code cannot. Isn't that strange? The result is absurd. On the one hand, DApps have a strong need for upgrades; on the other, the platform does not support them. So developers find their own way, using low-level mechanisms such as delegatecall to achieve upgradeability in a roundabout manner, and this even goes by the name of the upgradeable design pattern.

With this approach, developers can modify a smart contract without the user's consent, even without the user's knowledge. So what does code is law mean then? How can users know whether a smart contract can be upgraded, and which parts may change? Only by reading the code themselves. No wonder Ethereum has so few users. In any case, I am not qualified to use Ethereum DApps: I studied the fomo3d contract and still did not spot its random number vulnerability.

A small bug in Parity's multi-signature wallet contract locked up hundreds of millions of dollars, hurting Parity itself and many others. If you have to study the code thoroughly before you can trust and use a DApp, then the target users of DApps worldwide probably number in the thousands.

To build a DApp that can compete with centralized Internet applications, upgrades are a must. And they must be standardized upgrades supported by the platform, not each DApp improvising its own tricks. Take the Android platform as an analogy: mobile apps are upgraded often, but users must be informed and must agree, and if the new version requests additional permissions, these must be shown to the user.

All of this is controlled by the platform; a mobile app can only comply and cannot get around it. DApp upgrades should be even more standardized and rigorous, because DApps manage crypto assets and there is no trusted center. Both Polkadot and Cosmos allow application chain upgrades. Cosmos zones handle upgrades themselves, while Polkadot's parachains can be upgraded securely.

How Polkadot achieves secure parachain upgrades, I have not yet figured out. A few weeks ago Gavin Wood spoke about the Trusted Wormhole, and I did not understand it. If anyone knows this part, I would appreciate some pointers.

All in all, implementing standardized and secure application upgrades on a decentralized blockchain is very difficult, but there is no other option: upgrades must be supported, and they must be standardized, secure upgrades guaranteed by the platform. In terms of upgradeability, I think only Polkadot has chosen the right direction.

16. Network topology comparison

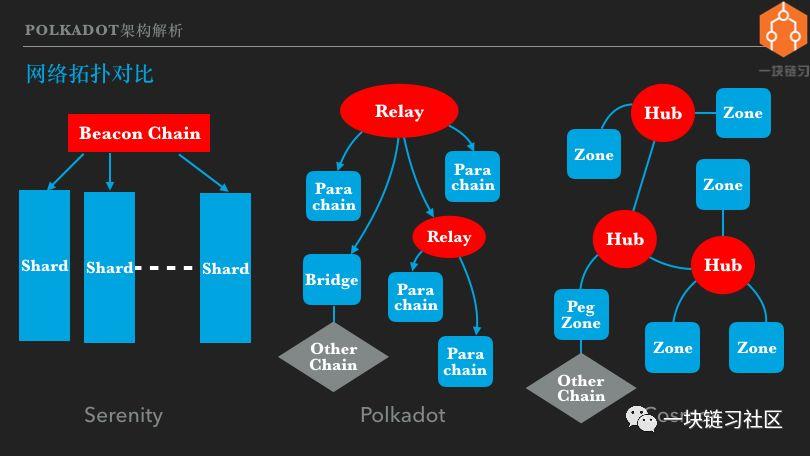

Let's talk about what kind of network topology the three blockchain ecosystems, Serenity, Polkadot and Cosmos, will form once they are fully developed. Note that this is the logical network, not the physical network. Also, full development takes time: the discussion here concerns the situation about five years from now, and part of it is speculation based on the architecture designs.

First look at the left side. The Serenity network is like an honor guard in square formation. The beacon chain is the standard bearer or leader, and each column of the formation is a standard shard that can carry a number of DApps. The middle picture is Polkadot. The Polkadot network is a tree, with a relay chain at the trunk connecting multiple parachains. A parachain can be a dedicated application chain or a DApp platform that supports smart contracts.

In addition, other types of blockchain can connect to the relay chain through bridges. Analysis of the Polkadot architecture shows that the number of parachains a single relay chain can support is limited by factors such as the number of validators. It is probably on the order of tens to about 100, and reaching several hundred would be difficult.

Of course, even a few hundred would not be enough to fully realize the web3 vision. So Polkadot will support cascading relay chains in the future: a first-level relay chain connects to the root relay chain, and a second-level relay chain connects to a first-level relay chain, giving the network unlimited capacity to expand.

The picture on the right is Cosmos. Multiple Hubs in Cosmos can be interconnected, with each Hub connecting multiple zones. There are also peg zones that connect other types of blockchain. The Cosmos network topology looks a lot like Polkadot's and is also a tree. However, between Cosmos Hubs there is no question of one providing security to another, so there is no hierarchy.

If the hierarchical relationship is taken as the direction of a connection, then Polkadot is a directed acyclic graph and Cosmos is an undirected acyclic graph. In fact, the Cosmos network topology could contain loops. The loop-free design was probably chosen to avoid cross-chain message routing problems.
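Whether an undirected topology is loop-free can be checked mechanically. The Python sketch below uses a union-find structure for this; the `has_loop` function and the Hub names are illustrative and not part of any Cosmos tooling.

```python
def has_loop(links):
    """Return True if the undirected links between chains contain a cycle."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps the trees shallow
            x = parent[x]
        return x

    for a, b in links:
        root_a, root_b = find(a), find(b)
        if root_a == root_b:
            return True  # a and b were already connected, so this link closes a loop
        parent[root_a] = root_b
    return False


tree = [("Hub1", "Hub2"), ("Hub2", "Hub3"), ("Hub1", "zone A")]
looped = tree + [("Hub3", "Hub1")]
```

In a loop-free network there is exactly one path between any two chains, so a message never needs a routing decision.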

By comparison, I think Serenity's growth pattern and resource allocation are somewhat rigid. The system grows top-down, supporting more shards through successive iterations. A DApp faces some uncertainty in choosing which shard to deploy on. For example, if a DApp is very successful and its demand exceeds the capacity limit of a single shard, what then?

At present there is no answer in sight. Or suppose that when your DApp goes online you choose a relatively idle shard, and then another DApp on the same shard becomes hugely popular. Your users can only put up with high fees and congestion.

To generalize briefly, Serenity does not allocate blockchain computing resources to DApps on demand. Cosmos and Polkadot grow bottom-up: new application chains join, existing ones exit, and resources are allocated accordingly.

The biggest difference between Cosmos and the other two platforms is that it does not share security and so sacrifices trust minimization to some extent, as already mentioned. So Polkadot has both shared security and bottom-up organic growth; is it the best? Polkadot does have these advantages, but it also has its own disadvantages.

The biggest problem, I think, is that the threshold for connecting a parachain will be high. According to the currently announced auction plan, there will be only 24 slots by the end of 2020. If you develop a parachain and hope to go online next year, you have to compete with teams around the world for those 24 places.

Of course, once smart contract platforms such as Edgeware launch, the threshold for DApps will come down to some extent. By contrast, deploying a DApp on Serenity has no threshold. Cosmos will be much better in this respect, because the Cosmos Hub can support more slots, and there will be multiple Hubs in the ecosystem competing with one another.

Looking at the bigger picture, interconnecting Serenity, Polkadot and Cosmos is feasible and will certainly happen. EOS and other DPoS blockchains can be connected too, together with layer 2 networks such as sidechains, and an interconnected network of heterogeneous blockchains will take shape.

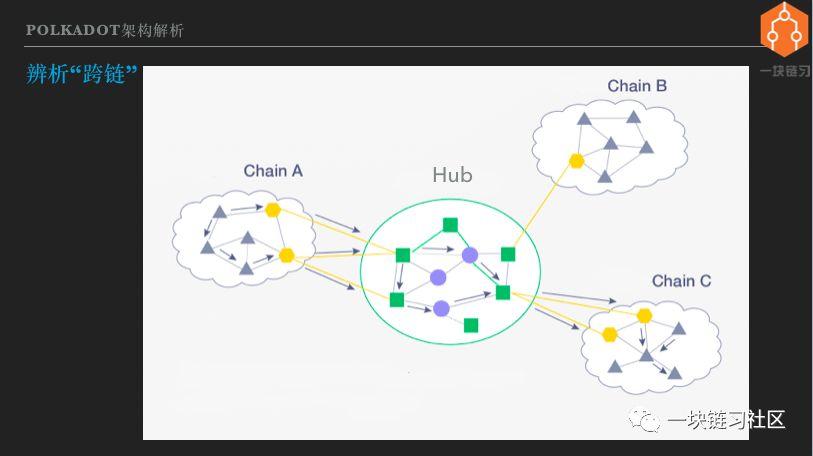

17. Clarifying "cross-chain"

This sharing is coming to an end, and only now do we begin to discuss the concept of cross-chain. The reason is that I think the term is ambiguous and misleading. At least it misled me for a long time. Previously, material about Cosmos and Polkadot always introduced them as cross-chain solutions.

My thought was: why would I want to cross chains? What is there to cross between? There may be hundreds or even thousands of public chains now, but how many of them are useful? Bitcoin counts as one; some people would add Ethereum and EOS, some would add ZCash and Monero. Either way, it is three to five.

With so few useful chains, what is there to cross? It felt like taking your pants off to fart: entirely superfluous. So whenever I came across an article about Cosmos or Polkadot, I glanced at the title and did not read on.

It was not until Gavin Wood demonstrated Substrate in Munich last year that I realized Polkadot is a new generation of public chain architecture based on divide and conquer, and a new form of DApp. Only since then have I paid attention to this field.

The future of blockchain envisioned by Polkadot and Cosmos is not one chain fits all, nor one chain to rule them all, but a constellation of many interconnected chains. Interconnection means the ability to carry out cross-chain transactions between blockchains, so cross-chain is the basic capability of an Internet of heterogeneous blockchains.

Bringing Bitcoin, Ethereum and other public chains into the blockchain Internet is one of the results, but not the whole meaning of cross-chain. So my view is that there is nothing wrong with the concept of cross-chain, but if it is understood as merely crossing between existing chains, the understanding misses the point.

I define cross-chain as implementing cross-chain transactions between heterogeneous blockchains. So what is not cross-chain, or which so-called cross-chain offerings on the market are selling dog meat under a sheep's head? First, a cross-chain transaction must change the state of two or more participating blockchains consistently, and every chain involved performs a write.

If one side only reads while the other writes, that is, the state of one blockchain is modified based on data from another, it is not cross-chain. And of course, merely reading data from multiple chains is even less so.
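The first criterion can be written down as a small predicate. The following is only a sketch of the definition given here, not code from any cross-chain project; the names `Access` and `is_cross_chain` are made up for illustration, and the check covers only the "two or more chains, all writes" part, not the consistency of the writes.

```rust
/// How one blockchain takes part in an operation (hypothetical type).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Access {
    /// The chain's data is only read.
    Read,
    /// The chain's state is modified.
    Write,
}

/// Returns true only if at least two chains take part
/// and every one of them has its state written.
pub fn is_cross_chain(accesses: &[Access]) -> bool {
    accesses.len() >= 2 && accesses.iter().all(|a| *a == Access::Write)
}
```

Under this predicate, an operation that reads chain A and writes chain B is rejected, as is one that only reads several chains. Only an operation that writes to both A and B qualifies.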

There is a second requirement: cross-chain transactions must not only be possible, they must also be trustworthy. Take the Cosmos cross-chain transfer example again: to transfer 10 tokens from zone A through the Hub to zone B, you need to trust three blockchain networks: zone A, the Hub and zone B.
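To make the three-network picture concrete, here is a toy model of that transfer. It is a sketch under strong assumptions: each chain is reduced to an in-memory balance table, and the escrow account `escrow:hub` and the hub-side record `zone-b` are invented names. It does not reproduce the actual Cosmos protocol. It only shows that all three ledgers are written and that their states change consistently.

```rust
use std::collections::HashMap;

/// A toy ledger standing in for one blockchain (zone A, the Hub or zone B).
#[derive(Debug, Default)]
pub struct Ledger {
    balances: HashMap<String, u64>,
}

impl Ledger {
    /// Current balance of an account; unknown accounts hold 0.
    pub fn balance(&self, account: &str) -> u64 {
        self.balances.get(account).copied().unwrap_or(0)
    }

    /// Adds `amount` to an account.
    pub fn credit(&mut self, account: &str, amount: u64) {
        *self.balances.entry(account.to_string()).or_insert(0) += amount;
    }

    /// Removes `amount` from an account, or fails without changing anything.
    pub fn debit(&mut self, account: &str, amount: u64) -> Result<(), String> {
        let current = self.balance(account);
        if current < amount {
            return Err(format!("insufficient balance: {} < {}", current, amount));
        }
        self.balances.insert(account.to_string(), current - amount);
        Ok(())
    }
}

/// Moves `amount` from `sender` on zone A to `receiver` on zone B via the hub.
/// Either all three ledgers are written or none is.
pub fn transfer_via_hub(
    zone_a: &mut Ledger,
    hub: &mut Ledger,
    zone_b: &mut Ledger,
    sender: &str,
    receiver: &str,
    amount: u64,
) -> Result<(), String> {
    // The only step that can fail runs first, so an error leaves every ledger untouched.
    zone_a.debit(sender, amount)?;
    // Zone A keeps the tokens in escrow instead of destroying them.
    zone_a.credit("escrow:hub", amount);
    // The hub records how many of zone A's tokens zone B now holds.
    hub.credit("zone-b", amount);
    // Zone B credits the receiver with the transferred amount.
    zone_b.credit(receiver, amount);
    Ok(())
}
```

If any one of the three ledgers were run by a dishonest party, the receiver's balance on zone B could not be relied on, which is why all three networks have to be trusted.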

Are these three networks worth trusting? You need to judge for yourself. If a network is highly decentralized and the total value of its staked PoS tokens is high, it is hard to attack and therefore worth trusting. In some so-called cross-chain solutions, the hub itself is not a blockchain but a gateway. Is the gateway trustworthy?

The answer is that a gateway is a computing facility operated by a single entity, so trusting the gateway presupposes trusting its operator. For example, we keep money in ICBC and spend it through Alipay, and nothing is wrong with that. Both Alibaba and ICBC are trustworthy, so the gateways they operate are trustworthy too.

But whether or not a gateway is trustworthy, it is not the cross-chain I am talking about here. In the cross-chain we are discussing, the hub uses distributed ledger technology and is operated in a decentralized way, so that the trust required is minimized.

From now on, when you come across a so-called cross-chain project, you can judge for yourself whether it supports decentralized cross-chain transactions between heterogeneous blockchains. If it does not, its "cross-chain" is not the same concept as that of Cosmos and Polkadot.

18. The next generation of DApp development technology

Personally, I think only one DApp has truly taken hold so far, and that is Bitcoin. Bitcoin is a decentralized store-of-value currency, or digital gold. Because it is a store of value, its performance requirements are very low.

In the next few years, decentralized payment and settlement currencies and decentralized exchanges are likely to take hold. The value of a settlement currency should be pegged, directly or indirectly, to purchasing power. Today's USDT, TUSD and JPMorgan's coin, as well as the future Facebook currency and central bank digital currencies, are all centralized. They cannot be trustless, permissionless or censorship-resistant.

Lightning Network and MakerDAO are important attempts and may lead to breakthroughs. Currency, lending, asset issuance, asset trading, insurance, derivatives... We are only a few real DApps away from disrupting traditional finance and changing the world. As blockchains scale and infrastructure matures, DApps are likely to see a real boom.

How should programmers move into DApp development? Let us sort out the next generation of DApp development technologies. Note that a DApp is an internet application. The back-end, front-end, mobile, browser and desktop technologies of internet development all remain valid, but they are outside the scope of this discussion. We only talk about the technologies that implement decentralization.

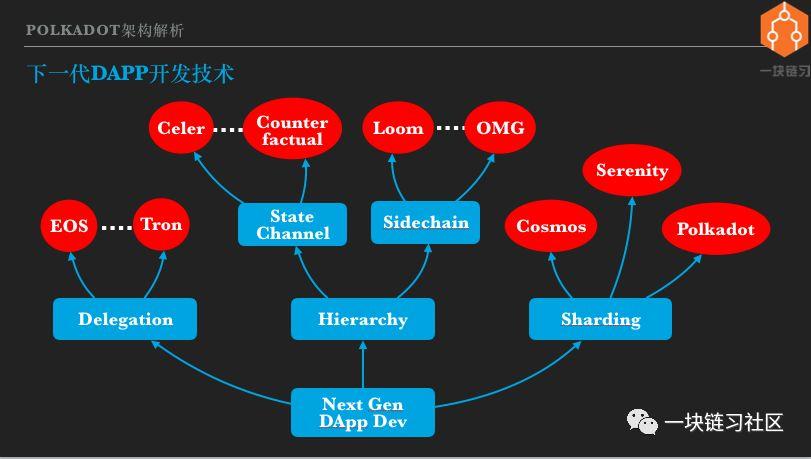

There are three scaling approaches, each with multiple implementations, and I list only representative projects. Scaling through a representative system is exemplified by EOS and TRON. Layered scaling splits into two branches: state channels and sidechains. State channel solutions include Celer Network and Counterfactual, while sidechains include Loom and OmiseGO. The three typical sharding solutions, Serenity, Polkadot and Cosmos, have been introduced one by one.

Seen this way, there seem to be many next-generation DApp development technologies. In fact there are not: at present, DApps are developed in only one way, as smart contracts. Smart contracts have two mainstream solutions, EVM and Wasm. The projects listed basically all support EVM, and either support Wasm now or will in the future.

The main development language for EVM is Solidity. An ecosystem has formed around it, including tools such as Truffle, Remix and OpenZeppelin, a large body of technical material and examples, and active community discussion and Q&A. Quite a few programmers already know the language. So mastering Solidity means you can develop DApps on most public chains.

The only exception at present is EOS. EOS does not support EVM and went straight to Wasm. Wasm will be the standard for future smart contract development, and it supports writing smart contracts in a variety of programming languages, including Java, C++, Go, Rust and more.

Cosmos and Polkadot provide a second way to develop a DApp: building an application-specific blockchain. Compared with smart contracts, the advantage of an application chain is great flexibility. Developers can choose or customize consensus algorithms, governance models, economic models and so on, and configure hardware and networks according to actual needs.

On the other hand, developing and operating an application chain costs significantly more than a smart contract. For example, to deploy a Cosmos zone you need at least 4 hosts, and you need to stake a considerable number of Atom tokens to connect to the Hub. It is fair to expect that only a team of a certain size will have the resources to develop and operate an application chain on Cosmos or Polkadot.

In short, the next generation of DApps has two kinds of development technology: lightweight smart contracts and heavyweight application chains. Individuals and small startup teams will mainly use smart contracts. Large enterprises and well-funded startup projects will use application chains.

It is also reasonable to implement a DApp with smart contracts first and then, once it has been validated and has gathered feedback, develop an application chain with richer functionality and a better experience.

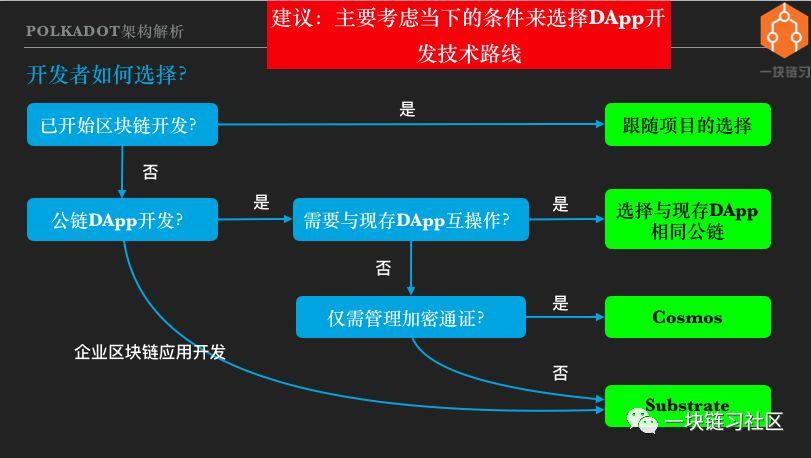

19. How do developers choose?

How should back-end or full-stack engineers choose a DApp development technology? I believe the future blockchain world will be a network of interconnected heterogeneous blockchains. Multiple platforms will each have room to survive, and no single one will necessarily replace the others.

So my suggestion is to choose a technical route mainly on current conditions, such as the capabilities of the platforms available today and the skills of your team members, and not to worry too much about future uncertainty.

If the project has already started, continue along the established route. If you have not started yet, first decide whether you are developing a public-chain DApp or an enterprise solution. If it is a public-chain DApp, ask whether it needs to interoperate with an existing DApp. If so, you should develop on the same public chain as that DApp.

In other words, if the DApp you need to interoperate with is on Ethereum, develop on Ethereum; if it is on EOS, develop on EOS. Someone may ask: can't you just go cross-chain? Cross-chain is a very complex technology and certainly not free. As long as the requirements are met, the implementation should be as simple as possible. So if you can avoid cross-chain, you should.

So what if you do not need to interoperate with an existing DApp? The question then is whether your DApp will interoperate with future DApps, or whether someone else's DApp will interoperate with yours.

For example, suppose you develop a token contract as your company's loyalty points system. If the points become widely used, can they be traded on a decentralized exchange, or used as collateral in other DApps? A successful DApp should try to integrate into the larger ecosystem of the internet of value. So interoperability is not optional but a basic requirement for a DApp, even though it need not mean interoperating with DApps that exist today.

The next question is: does the DApp only need to manage crypto tokens? If yes, prefer Cosmos; if no, choose Substrate. Why? As mentioned earlier, Cosmos can implement cross-chain token transfers, while Polkadot can implement any form of DApp interoperability.

Some might say that Polkadot is more powerful and flexible. I agree, but everyone should understand that the world is fair and there is no free lunch. The price of power and flexibility is complexity and high cost.

Cosmos is much simpler in architecture than Polkadot. So I can safely infer that, at least in the early days, Cosmos will be more reliable than Polkadot and cheaper. So if Cosmos meets your needs, you should choose it.

If the business needs go beyond crypto tokens, you can choose Substrate. There was also an earlier branch: what should you choose for developing an enterprise blockchain application, that is, a consortium chain? I think the answer there is also Substrate.
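The questions in this section can be condensed into one function. This is just a restatement of the decision path described in this section, offered as a sketch; the type, field and variant names (`Project`, `Route`, `choose_route` and so on) are hypothetical.

```rust
/// The recommended technical route (hypothetical names).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Route {
    /// The project has already started: continue along the established route.
    KeepCurrent,
    /// Develop on the same public chain as the DApp you must interoperate with.
    SameChainAsExistingDapp,
    /// The DApp only manages tokens.
    Cosmos,
    /// Anything beyond tokens, and enterprise or consortium chains.
    Substrate,
}

/// Answers to the questions asked in this section.
#[derive(Debug, Clone, Copy)]
pub struct Project {
    pub already_started: bool,
    pub enterprise_or_consortium: bool,
    pub must_interoperate_with_existing_dapp: bool,
    pub only_manages_tokens: bool,
}

/// Walks through the questions in the order the section asks them.
pub fn choose_route(p: &Project) -> Route {
    if p.already_started {
        return Route::KeepCurrent;
    }
    if p.enterprise_or_consortium {
        return Route::Substrate;
    }
    if p.must_interoperate_with_existing_dapp {
        return Route::SameChainAsExistingDapp;
    }
    if p.only_manages_tokens {
        Route::Cosmos
    } else {
        Route::Substrate
    }
}
```

For a new public-chain project that only issues loyalty points and has no existing DApp to interoperate with, `choose_route` returns `Route::Cosmos`. The same project with more complex on-chain logic gets `Route::Substrate`.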

Why not choose Hyperledger Fabric or Ethereum? Because a technology platform driven by commercial companies is bound to lose out to mainstream open platforms. As for Ethereum, I think it is not flexible enough for consortium chain development.

Enterprise business is often complex, with high demands on performance, manageability, etc., and often requires rapid iteration. Substrate is a complete blockchain framework that is highly modular and customizable. The Rust language focuses on security and performance and is also ideal for developing mission-critical systems.

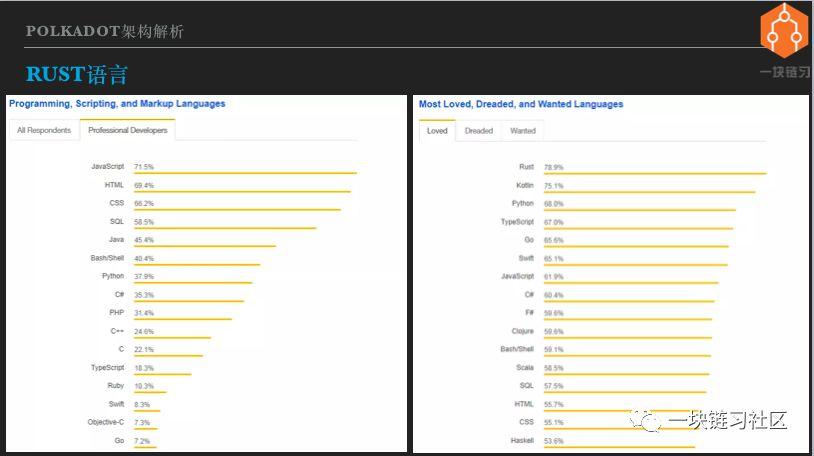

20. The Rust language

One issue that Polkadot/Substrate development cannot avoid is the Rust language. In more than 20 years as a developer, I have met a handful of programmers who can pick up a new language quickly. But most programmers, myself included, need a long time to master a language and become familiar with its standard library and frameworks.

So learning a new language is a tough decision for me. A number of development languages have emerged in recent years, and Rust is known among them for its steep learning curve. Is it worth spending a lot of time to learn and master Rust? As a Rust beginner, let me share my own opinion.

My first point is that Rust is excellent but not yet widely used. This is not just my opinion. According to the 2019 Stack Overflow survey, Rust ranks only 21st in usage; the chart on the left shows only the top 16, so Rust does not appear.

In the TIOBE programming language index, Rust currently ranks 34th. Now look at the chart on the right: Rust tops the Stack Overflow survey's list of most loved languages, for the fourth year in a row. Considering that Rust 1.0 was released in 2015, Rust has been developers' most loved language ever since its official release.

Everyone knows that a programming language usually cannot offer both high performance and ease of development. For example, Java and Python programmers do not need to think about memory management, which reduces the burden of learning and development, but the runtime has to perform garbage collection, which costs performance.

Conversely, C/C++ requires programmers to manage memory themselves. Performance can be optimal, but only if the program is written correctly, which makes development harder. Rust, however, seems to have its cake and eat it too: it performs at the level of C/C++ and is still loved by programmers.

My second point is that Rust suits experienced programmers developing platform-level projects. Rust places great emphasis on performance and safety. It tries to guide programmers toward efficient and safe code through the language specification, or rather the compiler. Once you are familiar with how Rust wants to be used, which might be called the way of Rust, developing high-performance, highly reliable systems comes naturally.

Rust holds that there is usually one best way to achieve a goal, and it tries to guide or force you toward that approach at the language level. If you do not code the Rust way, your program will not compile. Languages such as JavaScript are different: there are always many options, and you can follow your own habits.

Of course, the quality of the resulting code then varies widely and maintainability suffers. Learning and mastering the way of Rust requires understanding some important programming concepts, such as object ownership. Without solid development experience, it is hard to master. This is why people consider Rust's learning curve steep.
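A minimal illustration of ownership, one of the concepts mentioned above, may help. The function names are made up for this sketch. The commented-out line is the kind of code the compiler refuses to build.

```rust
/// Borrows the data: the caller keeps ownership and can go on using it.
pub fn inspect(blocks: &[u32]) -> usize {
    blocks.len()
}

/// Takes ownership of the data: after this call the caller can no longer use it.
pub fn consume(blocks: Vec<u32>) -> usize {
    blocks.len()
}

/// Shows a borrow followed by a move of the same value.
pub fn demo() -> (usize, usize) {
    let blocks = vec![1, 2, 3];
    // Borrow: `blocks` is still owned by `demo` afterwards.
    let seen = inspect(&blocks);
    // Move: ownership passes to `consume`, which frees the vector when it returns.
    let consumed = consume(blocks);
    // inspect(&blocks); // does not compile: borrow of moved value (error E0382)
    (seen, consumed)
}
```

No garbage collector is involved and no memory is freed by hand. The compiler tracks who owns the vector and rejects any use after the move, which is where both the safety and the steep learning curve come from.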

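If you are not developing a platform-level project with demanding performance and safety requirements, using Rust is a bit like using a sledgehammer to crack a nut, because the gains in performance and safety may not offset the cost of adopting a new language.

If you are building a platform-level or mission-critical system, Rust is worth considering. In addition, learning Rust pushes you to deepen your understanding of advanced development concepts such as memory, threads, asynchrony, closures and functional programming, which greatly benefits your skills as a developer.

To sum up briefly: if you have some development experience and may in future build, or aspire to build, platform-type systems, which of course includes blockchain development, then Rust is worth your time to learn and master.

I will close this talk with a famous saying of Russell's: diversity is the very source of happiness. Anyone who truly understands and appreciates the design philosophy of Polkadot and Cosmos will not be a maximalist, nor believe that Polkadot's goal is to replace Ethereum.

At least neither the Ethereum Foundation nor Parity thinks so. Parity has always been one of the pillars of the Ethereum ecosystem, and it is actively taking part in Serenity development.

A while ago, the Ethereum Foundation paid Parity 5 million US dollars, both as thanks for Parity's years of support for Ethereum and as funding for it to continue developing and maintaining Ethereum node software. I am no longer surprised by the Bitcoin cult; at least I can understand why its followers think and speak as they do.

But now a tendency toward an Ethereum cult is starting to appear in the Ethereum ecosystem as well, which makes little sense. Openness is the foundation of Ethereum's vision, and Ethereum has led us to see the possibility of a decentralized internet of value. So I think supporting Ethereum while opposing other blockchains is self-contradictory.

As discussed earlier, people's needs for decentralized applications are diverse. Serenity, Polkadot, Cosmos and EOS, along with other DApp platform public chains, have all made different design choices, or in other words different trade-offs. As a result, each suits some needs very well and others less well.

Interconnection is the prevailing trend. Any ecosystem that chooses to develop in isolation will be squeezed by the enormous network effects of the blockchain internet and eventually eliminated. So we can expect the future of blockchain to be one where a hundred flowers bloom, ever more diverse, and I hope blockchain and decentralized applications become a source of happiness for humanity.

Speaker: Liu Yi, partner at Random Capital, holder of a master's degree from Tsinghua University, and an expert in blockchain and big data technology, with 20 years of investment experience across multiple capital markets and an early Bitcoin investor. Source: WeChat public account 一块链习社区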
